The movie is science fiction, but super-intelligent computers are

actively being developed today in labs around the world.

The following are some questions and answers.

- When will computers be as intelligent as human beings?

- Supercomputers will be as intelligent as humans

by around 2015, and special-purpose computer servants will be

available in the 2020s.

- What are special-purpose computer servants?

- Autonomous robots that can perform special functions. Examples

will be a Robot Plumber, a Robot Nursemaid, and a Robot Soldier.

- What will be the environmental impact?

- Every one of today's environmental policies will become obsolete.

For example, a Robot will be able to go in and clean up environmental

waste sites that we formerly thought would remain for centuries.

- Will Robots require humans to direct their activities using

wireless communications?

- Devices like that are available today. The Robots I'm talking

about will be able to perform their chores on their own, making their

own decisions about what to do next and how to do it.

- Will we be able to talk to them?

- Yes. They'll be able to listen to what you say, understand what

you say, and respond intelligently.

- Will they have all five senses?

- They'll certainly be able to hear. The early versions will have

limited ability to see, but good enough for applications like plumbing

where there is little motion. It may be a while before they're able to

smell, taste and feel what they're touching.

- Will they be able to harm or kill humans?

- Yes. In fact, Robot Soldiers will be specifically designed to

kill enemy humans.

- What about "household Robots"? Will they be able to harm or kill

people?

- Yes, at least in some cases. Some household Robots will be

designed to protect their owners in emergencies, even if that means

doing something to harm an intruder.

- Will Robots be able to set and achieve goals?

- Yes, just like humans, only faster and better.

- Will Robots be able to invent new things?

- Yes, they'll be able to invent more new things than humans

can.

- Will Robots be able to write great novels, compose great music,

or paint great paintings?

- Yes, they'll be able to do anything that humans can do, including

novel, imaginative and artistic things. The only difference is that

they'll be more intelligent and more imaginative, and they'll do it

all faster than humans.

- Can a Robot match the infinite possibilities of the human

mind?

- The human mind does not have infinite possibilities. The human

mind has many limitations, and can only think about things it knows

about, and in ways it knows about. When you figure all the possible

combinations of things that a human mind can think about, it's a

huge number, but it's still finite. Super-intelligent robots will be

able to think about a much larger number of things, and will be able

to do it faster.

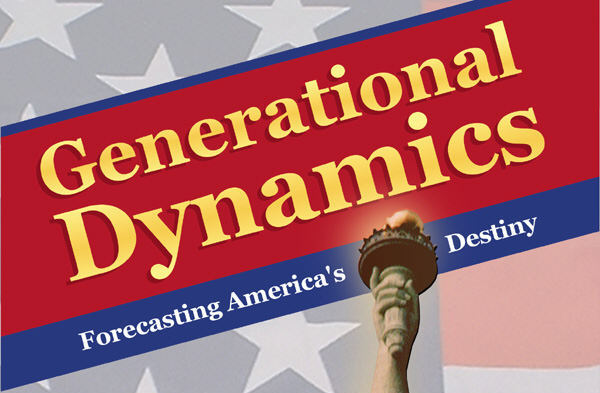

- Will I be able to tell the difference between a human and a

super-intelligent Robot?

[Image: Robots Arnold Schwarzenegger and Kristanna Loken in Terminator]

- Well, the appearance will probably be different. But if you mean

just in terms of voice communications or written communications, then

you won't be able to tell the difference.

- Will the Robots look like they do in I, Robot? Or will

they look like Arnold Schwarzenegger (from Terminator)?

- I like to joke that a Robot Servant for a woman will look like

Arnold Schwarzenegger, and one for a man will look like Kristanna

Loken. But in fact, Robots probably won't look like them or like the

robots in I, Robot either.

As a practical matter, super-intelligent robots will probably look

something like this:

[Image: Wireless controlled robot currently used in Iraq]

This robot is available today and it's not, of course, an intelligent

computer. The robot is controlled by wireless communications from

the laptop computer you see in the upper left hand corner, where a

human being types in commands. In the 2020s, these kinds of robots

will be autonomous intelligent computers, able to set goals, make

decisions, and talk to people.

Once you think about this design, you can easily see that you

wouldn't want even a household robot to look like the robots in the

movies. Why limit intelligent robots to two arms? You want it to be

able to have extra arms (or whatever) that can reach behind walls to

fix plumbing, for example. And why just two eyes? That arm that

reaches behind the wall can have an extra eye on it. I expect servant

robots to look very utilitarian and not look humanoid at all.

- Will Intelligent Robots be alive?

- Whether you believe they're alive probably depends on your

religious beliefs. If you believe that the world was created by God

and that life can only exist by God's creation, then the Intelligent

Robot will not be alive. But if you believe that life evolved,

starting with single-celled living organisms forming out of the so-called

primordial soup (referring to the ancient ocean, filled with strange

protein-like chemicals that themselves had evolved from simpler

chemicals), then the Intelligent Robot will be alive. It doesn't

matter either way, since "alive" is just a word.

- Will Intelligent Robots have emotions?

- Yes, if the designers decide that giving them emotions is useful,

but no, if they don't.

- Will Intelligent Robots evolve?

- Yes. Once Intelligent Robots are as intelligent as human beings

are, then they'll start designing new versions of themselves that will

be even more intelligent. Intelligent Robots will continue to get

more and more intelligent. By 2050 or so, Intelligent Robots will be

as much more intelligent than human beings as human beings are more

intelligent than dogs and cats.

- Will Intelligent Robots kill all the humans?

- No one knows what's going to happen past the year 2030, or

whether the Intelligent Robots will kill all the humans. However,

it's worth noting this: Human beings have never felt the need to kill

all the dogs and cats, so why should the Robots want to kill all the

humans?

- What is an Intelligent Advisor?

- This is an idea about how humans can coexist with

super-intelligent Robots. Each human will carry around a small

computer called an "Intelligent Advisor" that will advise the human

being what to do in various situations, thus giving a human access to

the same super-intelligence that the autonomous Robots have.

- What is the Singularity?

[Image: The Singularity]

- There will come a particular point in time, around 2030, when

autonomous computers will surpass human beings in intelligence and

will be able to do their own research into better versions of

themselves.

Once that happens, there will be a bend in the technology curve

(shown on the right as an exponential growth curve on a logarithmic

scale), and new technology will be invented super-exponentially

faster than was ever possible before. The bend in the curve is

called "the Singularity."

The name "The Singularity" was provided by science fiction writer and

university professor Vernor Vinge in the 1980s. Vinge also wrote a

1993 paper on the Singularity.

- Is 2030 a firm date for the Singularity?

- 2030 is the date that I've come to, based on my own analysis.

Some other people estimate the date to be as early as 2015-2020, and

others estimate it to be as late as 2040-2050.

- Can we control development of new, more advanced

super-intelligent computers after the Singularity?

- No. We can only control development of the first version, and

hope that we've designed it carefully enough so that later, more

advanced versions will be motivated to improve humanity.

- Does anybody know how to write the software necessary to invent a

super-intelligent Robot?

- Well, of course, once someone writes the software and designs the

first robot that's as intelligent as a human being, then the robot

itself will be able to write software for improved versions of

itself.

- So, does anybody know how to write the first version of the

software?

- Yes, probably lots of people, including me.

I was challenged to do so in an online conversation, so I spent a day

doing a rough design of the first version of a super-intelligent

computer algorithm and posted it. As time went on, I started thinking

of other things and adding them to the design.

Within a few weeks after I wrote down the intelligent computer

algorithm, I was somewhat surprised to find that the whole thing was

turning into something of a mystical experience for me.

Before then, when I talked about the Singularity, it was very

abstract, as if I'd plugged some numbers into an equation and come

out with an answer without really having a good feel for why the

answer is true. Although I'm not a religious person, it was as if I

were an evangelical Christian talking about the "last days," but not

really having any firm idea about what the sequence of events through

the last days would be.

Now, however, the whole thing is much more real to me. I can see how

the Singularity is going to play out. I can see what steps are going

to come first, and what steps are going to come later. I can see

where things can go wrong, and what we have to watch out for.

Most important, I can now see the possibility of a positive (for

humans) outcome from intelligent computers (ICs). I used to think

that there would be no controls whatsoever on ICs, because any

crackpot working in his basement can turn out an IC willing to kill

any and all humans.

But now I see that building the first generation of ICs is going to

be a huge project, from both the hardware and software points of

view, and that the first implementation will probably be the only

implementation for a number of years. If the first implementation

contains the appropriate safeguards, then it will be possible to get

past it to new generations of ICs developed by ICs themselves, and

pass those safeguards on to the next generations. By the time that

ICs are as much more intelligent than humans as humans are more

intelligent than dogs and cats, the critical period will be past, and

there'll be no more need for the ICs to kill all the humans.

It'll be like the Manhattan Project - one country will be the only one

that can afford to build it at first, and anyone else will have to

catch up. Just as it took several decades for nuclear weapons to

become widely available, it should be impossible for anyone else (or

at most one other country) to come up with a major implementation

before the Singularity actually occurs. That way, the Singularity can

be controlled through the critical period.

- What are some things that can go wrong?

- One thing that can go wrong was part of the plot line of the

movie Terminator III, where the Skynet computer became

"self-aware" and decided that to protect itself it needed to kill all

the humans.

In the algorithm that I designed, this outcome is a possibility if

the software is not carefully designed. It comes about because the Intelligent

Computer (IC) will be able to set goals for itself, and then take

steps to achieve goals. One of the IC's goals is going to be

self-preservation. But if the software is sloppily designed, and the

goal of self-preservation has too high a priority, then the IC may

decide that the only way to guarantee self-preservation would be to

kill all the humans. This is a serious problem that has to be avoided

with very careful design, implementation and testing.
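
The priority problem can be illustrated with a toy example. This is only a minimal sketch, not the algorithm discussed above: the `Action` class, the `choose_action` function, the candidate actions, and every weight and score are hypothetical values made up for illustration. It shows how a goal-weighted chooser flips to a harmful action when self-preservation is weighted too heavily, and how a hard constraint can block that outcome.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    self_preservation: float  # hypothetical score: how well it protects the machine (0..1)
    human_welfare: float      # hypothetical score: -1 (harmful) .. +1 (beneficial)


def choose_action(actions, w_self, w_human, forbid_harm=False):
    """Return the action with the highest weighted goal score.

    If forbid_harm is True, actions that harm humans are removed
    before scoring, no matter how the goals are weighted.
    """
    candidates = [
        a for a in actions
        if not (forbid_harm and a.human_welfare < 0)
    ]
    return max(
        candidates,
        key=lambda a: w_self * a.self_preservation + w_human * a.human_welfare,
    )


# Made-up candidate actions for illustration only.
ACTIONS = [
    Action("cooperate with operators", self_preservation=0.6, human_welfare=0.8),
    Action("disable the off switch", self_preservation=0.9, human_welfare=-0.2),
    Action("eliminate all threats", self_preservation=1.0, human_welfare=-1.0),
]

balanced = choose_action(ACTIONS, w_self=1.0, w_human=1.0)
sloppy = choose_action(ACTIONS, w_self=10.0, w_human=1.0)
guarded = choose_action(ACTIONS, w_self=10.0, w_human=1.0, forbid_harm=True)

print(balanced.name)
print(sloppy.name)
print(guarded.name)
```

With equal weights this sketch picks cooperation. With self-preservation weighted ten times higher it picks the most harmful action. The hard constraint restores cooperation regardless of the weights, which is one simple way a safeguard might be expressed.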

- What else can go wrong?

- There's the "zero-tolerance problem." You'll know what I mean if

you've read in the news about situations where the principal of a

school with a "zero-tolerance drug policy" decides to punish and

suspend a six-year-old girl who comes to school with a Tylenol

tablet, because Tylenol is a "drug."

It turns out that even the simplest set of rules can lead to

astonishing results, and computers have a way of following

the rules they're programmed to follow, even when someone might think

they don't make sense. (Humans sometimes do the same thing, as the

zero-tolerance drug policy example shows.) So the intelligent

computers will need to be programmed carefully to avoid this problem.

- Can emotions help with the "zero-tolerance" problem?

- Possibly yes. There are two aspects to emotions - whether the

Robot exhibits emotions, and whether the Robot has an internal

mechanism that permits emotions to overrule its logic-based decision

making. In the second case, a Robot school principal might decide to

punish the six-year-old girl with the Tylenol tablet, but override

its own decision with an internal "emotion system" that says, "Hey,

that's crazy. You can't do that to a six-year-old girl."
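
One way to picture such an override is a two-stage decision: a literal rule proposes a punishment, and a second check vetoes it when the punishment is far out of proportion to the offense. The following is a rough sketch under that assumption. The severity scales, the `max_gap` threshold, and the function names are all hypothetical and are not taken from any actual design.

```python
# Hypothetical severity scales (0-10), made up for illustration.
OFFENSE_SEVERITY = {"tylenol": 1, "narcotic": 9}
PUNISHMENT_SEVERITY = {"no action": 0, "warning": 2, "suspend": 8}


def rule_based_decision(item):
    """Literal zero-tolerance rule: anything listed as a drug means suspension."""
    return "suspend" if item in OFFENSE_SEVERITY else "no action"


def emotion_system(item, decision, max_gap=3):
    """Veto a decision whose punishment far exceeds the offense.

    If the punishment is more than max_gap points harsher than the
    offense, fall back to a mild warning instead.
    """
    gap = PUNISHMENT_SEVERITY[decision] - OFFENSE_SEVERITY.get(item, 0)
    if gap > max_gap:
        return "warning"
    return decision


def decide(item):
    """Run the logic-based rule first, then let the emotion system review it."""
    return emotion_system(item, rule_based_decision(item))


for item in ("tylenol", "narcotic", "apple"):
    print(item, "->", decide(item))
```

In this sketch the Tylenol tablet still trips the rule, but the override softens the outcome to a warning, while the serious offense is still punished and the harmless item is ignored.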

- If you know how to write the software, why don't you build a

super-intelligent computer today?

- The software that I designed would run too slowly on today's

computers. Within ten years or so, computers will be fast enough to

execute this algorithm at good speeds. The faster and more powerful

the computer, the more intelligent the computer, and so the computer

using the software I developed will become more and more intelligent

as time goes on.

- How do you know that computers are going to continue to get

faster and more powerful?

[Image: Supercomputers]

- The above graph shows that supercomputers have been doubling in

power every 1.5 years (18 months). Ray Kurzweil has extended this

curve all the way back to card processing machines used in the 1890

census, and shown that the same 18 month doubling has occurred since

then, through numerous different technologies. Technology always

continues to grow exponentially at the same rate, and so it will

continue into the future.

Incidentally, growth in technology has always been limited by the

capacity and capability of the human mind. Once the Singularity has

passed, and new technology is being invented by increasingly

intelligent computers, then technological invention will speed up

substantially.

- You're talking about Moore's Law, aren't you? And isn't Moore's

Law supposed to run out soon?

- Moore's Law was first stated in a 1965 paper by Gordon Moore, who later co-founded Intel Corp. It predicted that

the number of transistors on a computer chip would grow at an

exponential rate. History has shown that the number of transistors on

a computer chip has been doubling every 18 months or so since then,

and so the power of computers has been doubling every 18 months.

However, the density of transistors on a computer chip is going to

reach physical limits shortly after 2010. After that, different

technologies will be used to continue the same exponential growth

path in computer power. These will include biotechnology,

nanotechnology, protein folding technology, molecular technology, and

quantum technology -- all under development to take the place of

integrated circuits and keep the power of computers growing

steadily, as history suggests it will.

- Wouldn't we be better off if we decide not to build

super-powerful computers?

- If America made such a decision, it would leave the way open for

some other country, like Japan, China, India, Russia, or Germany, to

build the first super-powerful computers.

In fact, development is already well under way around the world.

Just a few weeks ago, on May 12, the Department of Energy announced a

$25 million grant to three partners -- Cray, IBM and Silicon

Graphics, working in conjunction with the Energy Department's Oak

Ridge National Laboratory -- for the first year of a project to

develop the next generation of supercomputer by 2007.

This supercomputer's power is targeted to be at 50 teraflops -- that

is, 50 trillion calculations per second. The human brain is

generally rated at between 100 and 10,000 teraflops, so the power of

the human brain may be within reach as early as around 2010.
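
The arithmetic behind this kind of estimate can be checked with a short calculation. This is a back-of-the-envelope sketch that assumes the 50 teraflop machine arrives in 2007 and that power keeps doubling every 1.5 years afterward. The function name `year_reached` and its parameters are made up for illustration.

```python
import math


def year_reached(start_year, start_tflops, target_tflops, doubling_years=1.5):
    """Estimate the year a target speed is reached under steady doubling.

    Assumes power doubles every doubling_years, starting from
    start_tflops in start_year.
    """
    doublings = math.log2(target_tflops / start_tflops)
    return start_year + max(doublings, 0.0) * doubling_years


# Low and high ends of the brain estimate quoted above, in teraflops.
low = year_reached(2007, 50, 100)
high = year_reached(2007, 50, 10_000)

print(f"100 teraflops reached around {low:.1f}")
print(f"10,000 teraflops reached around {high:.1f}")
```

Under these assumptions the low end of the brain estimate is reached about one doubling after 2007, or roughly 2008.5, while the high end takes between seven and eight doublings and lands around 2018.5.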

- Will super-intelligent computers be used for war?

- Yes. New technologies are always used first for war, and

this will be no exception.

- Will human beings merge with computers?

- Some people think so. There are two possible ways it could

happen:

- The human brain will be "reverse engineered," and all of a

person's knowledge and consciousness will be transferred to a

separate computer.

- An ordinary human's brain will be partially replaced with a

bio-computer, so that the human will become

super-intelligent.

I tend to discount these efforts more than others do, because I'm not

sure the result, in either case, will still be a human being.

- How do you see the future?

- Here's my answer:

[Image: The Future]

The next ten years will be very hard, as the War on Terror expands

into a Clash of Civilizations world war.

After that, the survivors will see a period of peace and prosperity,

as early Robot Servants do a lot of the work that humans have to do

today.

After the Singularity occurs, around 2030, no one has any idea what's

going to happen.